[Added August 27, 2014: GiveWell Labs is now known as the Open Philanthropy Project.]

Previously, we wrote about the need to trade off time spent on (a) our charities that meet our traditional criteria vs. (b) broadening our research to include new causes (the work we’ve been referring to as GiveWell Labs). This post goes into more detail on the considerations in favor of assigning resources to each, and lays out our working plan for 2013.

- Most importantly, we would guess that the best giving opportunities are likely to lie outside of our traditional work, and our mission and passion is to find the best giving opportunities we can. Our traditional criteria apply only to a very small subset of possible giving opportunities, and it’s a subset that doesn’t seem uniquely difficult to find funders for. (While there are certainly causes that are easier to raise money for than global health, it’s also the case that governments and large multilateral donors such as GFATM put large amounts of money into the interventions we consider most proven and cost-effective, including vaccines – hence our inability to find un-funded opportunities in this space – as well as bednets, cash transfers and deworming.) While we do believe that being able to measure something is a major plus holding all else equal – and that it’s particularly important for casual donors – we no longer consider ourselves to be “casual,” and we would guess that opening ourselves up to the full set of things a funder can do will eventually lead to substantially better giving opportunities than the ones we’ve considered so far.

- We believe that we are hitting diminishing returns on our traditional research. We have been fairly thorough in identifying the most evidence-supported interventions and looking for the groups working on them, and we believe it’s unlikely that there are other existing charities that fit our criteria as well as or better than our current top charities. We have previously alluded to such diminishing returns and now feel more strongly about them. We put a great deal of work into our traditional research in 2012, both on finding more charities working on proven cost-effective interventions (nutrition interventions and immunizations) and on more deeply understanding our existing top charities (see “Revisiting the case for insecticide-treated nets,” “Insecticide resistance and malaria control,” “Revisiting the case for developmental effects of deworming,” and “New Cochrane review of the Effectiveness of Deworming”). Yet none of this work changed anything about our bottom-line recommendations; the only change to our top charities came because of the emergence/maturation of a new group (GiveDirectly).

Putting in so much work without coming to new recommendations (or even finding a promising path to doing so) provides, in our view, a strong sign that we have not been using our resources as efficiently as possible for the goal of finding the best giving opportunities possible. We believe that substantially broadening our scope is the change most likely to improve the situation.

- GiveWell Labs also has advantages from a marketing perspective – improving our chances of attracting major donors – as discussed previously.

We also see major considerations in favor of maintaining a high level of quality for our more traditional work:

- GiveWell Labs is still experimental, and we haven’t established that this work can identify outstanding giving opportunities or that there would be broad demand for the recommendations derived from such work. By contrast, we have strong reason to believe that we do our traditional work well and that there is broad and growing demand for it.

- When giving season arrives this year, many donors (including us) will want concrete recommendations for where to give. At this point, the best giving opportunities we know of are the ones identified by our traditional process, and continuing to follow this process is the best way we know of to find outstanding giving opportunities within a year (though we believe that GiveWell Labs is likely to generate better giving opportunities over a longer time horizon).

- The survey we recently conducted has lowered the weight that we place on a third potential consideration. We previously believed that “a sizable part of our audience values our traditional-criteria charities and does not value our cause-broadening work.” However, in going through our survey responses, we found that over 95% of respondents were interested in or open to (i.e., marked “1” or “2” for) at least one category of research that falls clearly outside our traditional work (we consider the first two categories on the survey to fall within our traditional “proven cost-effective” framework). Furthermore, over 90% of respondents were interested in or open to at least one category of research that would not be directly connected to evidence-backed interventions at all (i.e., would involve neither funding evidence-backed interventions nor funding the creation of better evidence). Even when looking at the four areas that we believe to be most controversial (the three political-advocacy-related areas and the “global catastrophic risks” area), ~70% of respondents expressed interest in or openness to these categories. These figures were broadly the same whether considering the entire set of survey respondents or subsets such as “donors” and “major donors.”

More information on the survey is available at the end of this post.

- Continuing to do charity updates on our existing top charities.

- Reviewing any charity we come across that looks like it has a substantial chance of meeting our traditional criteria as well as, or better than, our current #1 charity (which would require not only that the charity itself has outstanding transparency, but also that the intervention it works on has an outstanding academic evidence base). We have created an application page for charities that believe they can meet these criteria.

- Hiring. As mentioned previously, we believe our process has reached a point where we ought to be able to hire, train and manage people to carry it out with substantially reduced involvement from senior staff. We are currently hiring for the Research Associate role, and if we could find strong Research Associates we would be able to be more thorough in our traditional work at little cost to GiveWell Labs.

We plan to execute on all three of these items. We do not plan, in 2013, to prioritize (a) looking more deeply into the academic case for our top charities’ interventions; (b) searching for, and investigating, charities that are likely to be outstanding giving opportunities but less outstanding than our current #1 charity; (c) investigating new ways of delivering proven cost-effective interventions, such as partnering with large organizations via restricted funding; (d) reviewing academic evidence for possibly proven cost-effective interventions that we have not found outstanding charities working on. All four of these items may become priorities again in the future, depending largely on our staff capacity.

Between the above priorities and other aspects of running our organization (administration, processing and tracking donations, outreach, etc.) we have significant work to do that doesn’t fall under the heading of GiveWell Labs research. However, we expect to be able to raise our allocation to GiveWell Labs, to the point where our staff overall puts more total research time into GiveWell Labs than into our traditional work.

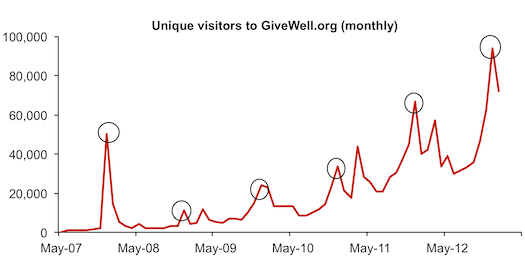

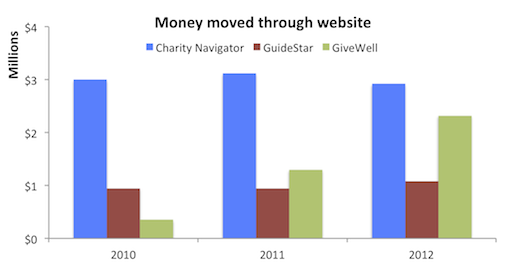

For reasons similar to those we laid out last year, we continue to prioritize expanding and maintaining our research above other priorities. We do not expect to put significant time into research vetting (see this recent post on the subject) or exploring new possibilities for outreach (though we will continue practices such as our conference calls and research meetings). We are continuing to see strong growth in money moved without prioritizing these items.

Survey details:

We published our feedback survey and linked to it in a blog post and an email to our email list. We also emailed most people we had on record as having given $10,000 or more in a given year to our top charities, specifically asking them to complete the survey if they hadn’t already done so.

In analyzing the data, we looked at the results for all submissions (minus the ones we had identified as spam or solicitations); for people who had provided their name and reported giving to our top charities in the past; for people who reported giving $10k+ to our top charities in the past, regardless of whether they provided their name; and for people who we could verify (using their names) as past $10k+ donors. The results were broadly similar with each of these approaches.

We do not have permission to share individual entries, but for questions asking the user to provide a 1-5 ranking (which comprised the bulk of the survey), we provide the number of responses for each ranking, for each of the four groups of respondents described in the previous paragraph. These are available at our public survey data spreadsheet.